The 50-year-old computing principle is finally coming up against the realities of physics and economics.

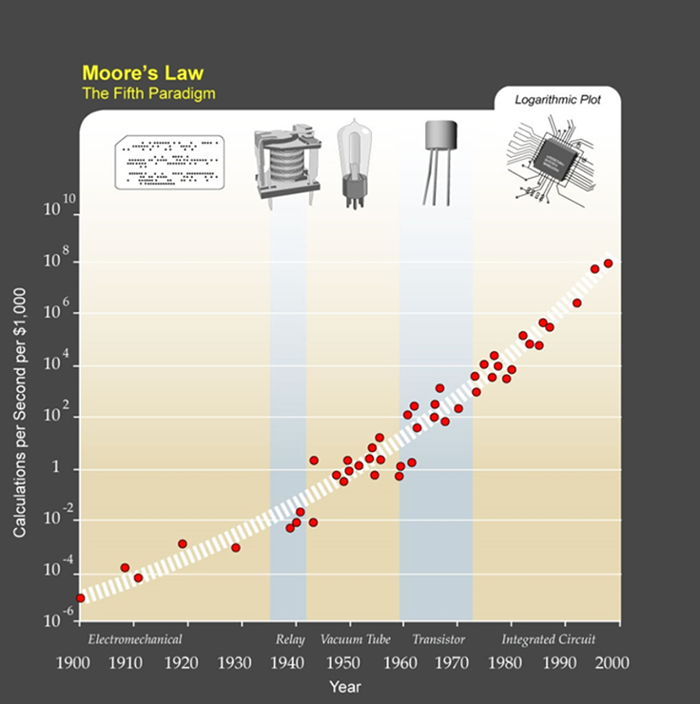

Moore’s law states that the number of transistors on a microprocessor chip will double every two years or so, which has generally meant that the chip’s performance will, too. The exponential improvement that the law describes transformed the first crude home computers of the 1970s into present-day smartphones while at the same time reducing the cost per chip.
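The doubling rule can be turned into a quick back-of-the-envelope calculation. The sketch below assumes an idealized doubling period of exactly two years. The function name `projected_transistors` is made up for illustration, and the starting point of roughly 2,300 transistors for Intel's 4004 in 1971 is a commonly cited figure, not one taken from this article.

```python
def projected_transistors(initial_count, years_elapsed, doubling_period_years=2.0):
    """Idealized Moore's law: the count doubles once per doubling period."""
    return initial_count * 2 ** (years_elapsed / doubling_period_years)


# Assumption: about 2,300 transistors on an early-1970s microprocessor (Intel 4004, 1971).
start_year, start_count = 1971, 2300

for year in (1971, 1985, 2000, 2015):
    estimate = projected_transistors(start_count, year - start_year)
    print(f"{year}: roughly {estimate:,.0f} transistors per chip")
```

Under these assumptions, 44 years means 22 doublings, a factor of about four million, which puts the 2015 estimate in the billions of transistors.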

Copyright: Ray Kurzweil, U.S. Inventor and futurist

This exponential growth in computing capacity was matched by exponentially growing demand for new computer applications, coupled with the introduction of new devices such as smartphones to the mass market.

However, the silicon-based production of chips is finally reaching its limits. One problem is the heat generated when more and more silicon circuitry is jammed into the same small area. Some even more fundamental limits, such as the limit on how far circuits can be miniaturized, are less than a decade away. Top-of-the-line microprocessors currently have circuit features around 14 nanometers across, smaller than most viruses. Reducing this further, for example to 3 nanometers, is likely to introduce quantum uncertainties that make the chips unreliable.

To the chip makers, the end of Moore’s law is not just a technical issue; it is also an economic one. Some companies, notably Intel, are still trying to shrink the chips, but every time the scale is halved, manufacturers need a whole new generation of ever more precise photolithography machines. Building a new production line today requires an investment typically measured in many billions of dollars, something only a handful of companies can afford.

In summary, it is foreseeable that the scaling effects which have driven silicon-based production over the last 50 years will come to an end.

At the same time, there is no end in sight when one looks at the market demand for new computer devices and applications. This demand has grown exponentially in parallel with Moore’s law in the past, and it can be expected to continue to do so or even accelerate.

Key drivers of this demand include:

- embedded smart sensors that comprise the ‘Internet of things’

- wearables that handle complex communication tasks with minimal power consumption

- artificial intelligence agents driven by neural networks, capable of handling complex machine learning applications

- cloud computing and the processing of huge amounts of unstructured data for analytical purposes

That said, the entire semiconductor industry is making huge efforts to keep up with this potential for exponential growth.

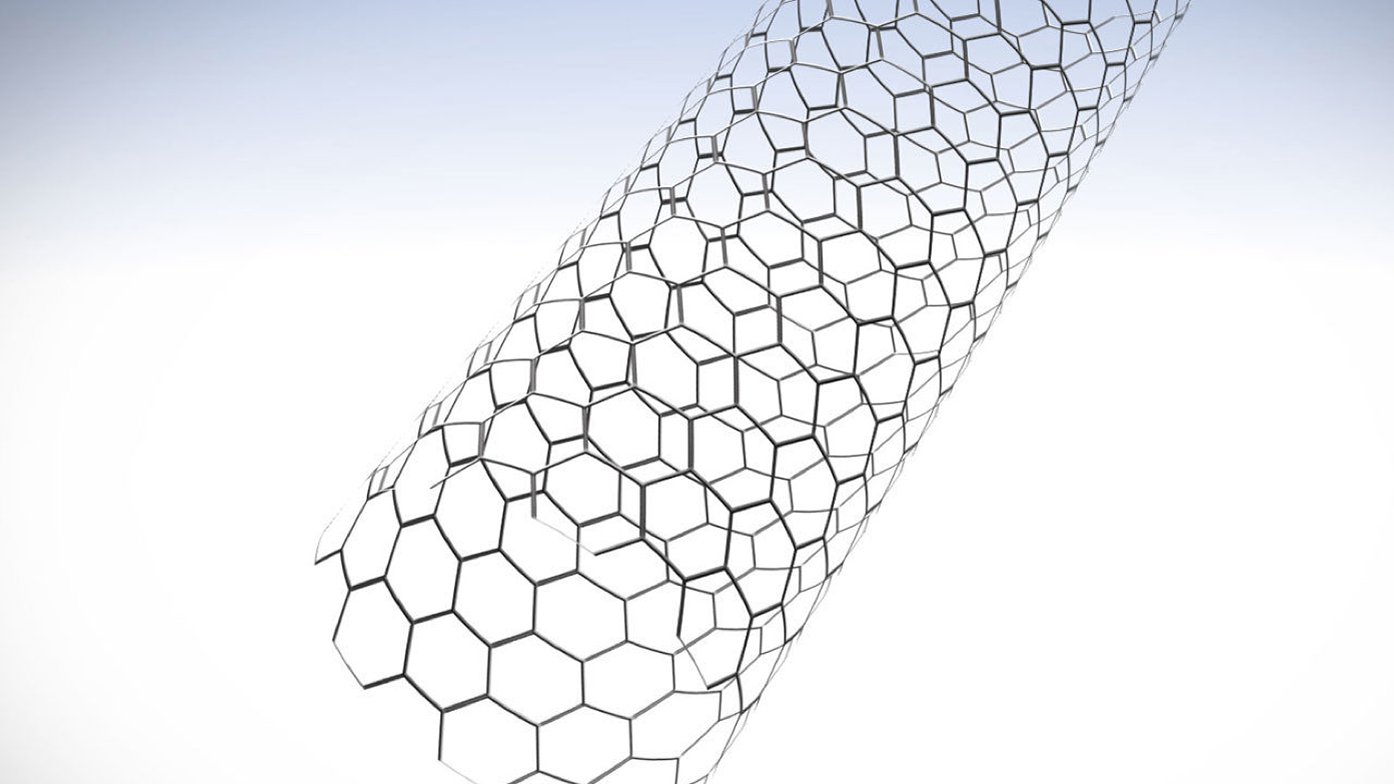

In an announcement made in October 2015, IBM’s Research division stated that it had discovered a way to replace silicon semiconductors with carbon nanotube transistors, an innovation that IBM believes will dramatically improve chip performance and get the industry past the limits of Moore’s law.

IBM said its researchers successfully shrank transistor contacts in a way that didn’t limit the power of carbon nanotube devices. The result, the company said, could be smaller and faster computer chips that significantly surpass what’s possible with today’s silicon semiconductors.

[Image: courtesy of IBM Research]

The IBM researchers have shown that carbon nanotube transistors are capable of working as switches at widths 10,000 times thinner than a human hair, and less than half the size of the most advanced silicon technology. Incorporating carbon nanotubes into semiconductor devices could result in smaller chips with greater performance and lower power consumption.

Wilfried Haensch, who leads IBM Research’s nanotube project, states that they can make the transistors as small as necessary, which is a big step toward the company’s goal of having carbon nanotube technology ready for mass production by 2020. All told, IBM believes, the new research is jump-starting the move to a post-silicon future, and paying off a $3 billion investment in chip research and development the company had announced in 2014.

In addition to the efforts to replace silicon-based chips with carbon nanotube designs, other initiatives are under way to overcome the limits of Moore’s law.

One such effort is the development of a quantum computer by the Canadian company D-Wave Systems. The company claims to have developed the first commercially available quantum computer, with customers such as Lockheed Martin, Google and NASA.

Image courtesy of D-Wave Systems

Rather than store information as 0s or 1s as conventional computers do, a quantum computer uses qubits, which can be a 1 or a 0 or both at the same time. This “quantum superposition”, along with the quantum effects of entanglement and quantum tunneling, enables quantum computers to consider and manipulate all combinations of bits simultaneously, making quantum computation powerful and fast. A team of computer scientists and physicists working for Google recently posted a study containing a very bold claim: the quantum computer Google had purchased from Canadian start-up D-Wave Systems in 2013 is in fact performing quantum computations as advertised.
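To make the idea of superposition a little more concrete, here is a small classical sketch of how a register of n qubits is usually described on paper: as a list of 2^n amplitudes, one per bit pattern. It is an illustration only. It does not model entanglement, tunneling, or D-Wave's hardware, and the function name `uniform_superposition` is hypothetical.

```python
import math


def uniform_superposition(num_qubits):
    """Return the 2**n amplitudes of an equal superposition of all bit patterns."""
    num_states = 2 ** num_qubits
    amplitude = 1.0 / math.sqrt(num_states)
    return [amplitude] * num_states


state = uniform_superposition(3)

# Measuring the register yields each bit pattern with probability amplitude**2.
for index, amplitude in enumerate(state):
    print(f"|{index:03b}>  probability {amplitude ** 2:.3f}")

# The number of amplitudes doubles with every added qubit.
for n in (10, 20, 30):
    print(f"{n} qubits -> {2 ** n:,} amplitudes")
```

The second loop shows why simulating even a modest number of qubits on a conventional machine quickly becomes impractical: each extra qubit doubles the amount of state to keep track of.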

There is considerable controversy about the economic viability of quantum computing, which operates at temperatures close to minus 273°C. However, many scientists agree that the concept is valuable for very specific computer applications such as weather forecasting, network optimization, or machine learning.

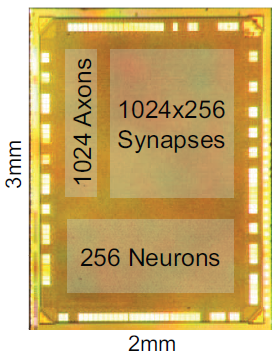

Another effort to provide more powerful systems is IBM’s development of the new SyNAPSE Chip.

Introduced in August 2014 by scientists from IBM, this first neurosynaptic computer chip delivers an unprecedented scale of one million programmable neurons with 256 million programmable synapses to achieve 46 billion synaptic operations per second per watt.

There is a huge disparity between the human brain’s cognitive capability and ultra-low power consumption and what today’s computers can offer. To bridge the divide, IBM scientists created something that didn’t previously exist: an entirely new neuroscience-inspired, scalable and efficient computer architecture that breaks with the prevailing von Neumann architecture used almost universally since 1946.

With 5.4 billion transistors, this fully functional and production-scale chip is currently one of the largest CMOS chips ever built. Yet while running in real time, it consumes a minuscule 70 mW, orders of magnitude less power than a modern microprocessor. A neurosynaptic supercomputer the size of a postage stamp that runs on the energy equivalent of a hearing-aid battery, this technology could transform science, technology, business, government, and society by enabling vision, audition, and multi-sensory applications.
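Taking the quoted figures at face value, a little arithmetic shows what they imply. The sketch below is only a unit conversion on the numbers in the text (46 billion synaptic operations per second per watt, 70 mW, one million neurons, 256 million synapses). The variable and function names are made up for illustration, and it assumes the efficiency figure applies at the 70 mW operating point.

```python
def synaptic_ops_per_second(ops_per_second_per_watt, power_watts):
    """Throughput implied by an efficiency figure at a given power draw."""
    return ops_per_second_per_watt * power_watts


OPS_PER_SECOND_PER_WATT = 46e9   # quoted efficiency
POWER_WATTS = 0.070              # quoted 70 mW
NEURONS = 1_000_000
SYNAPSES = 256_000_000

throughput = synaptic_ops_per_second(OPS_PER_SECOND_PER_WATT, POWER_WATTS)
synapses_per_neuron = SYNAPSES // NEURONS
energy_per_op_joules = 1.0 / OPS_PER_SECOND_PER_WATT

print(f"Implied throughput: {throughput:.2e} synaptic operations per second")
print(f"Synapses per neuron: {synapses_per_neuron}")
print(f"Energy per synaptic operation: {energy_per_op_joules * 1e12:.1f} picojoules")
```

With these inputs the chip would perform on the order of three billion synaptic operations per second, at roughly twenty picojoules each.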

In summary, as Moore’s law of exponential growth comes to an end due to the limitations of silicon-based chip design, it will be renewed by chips incorporating new materials and architectures, possibly outpacing the speed of change we have experienced in the last 50 years.

This development will be largely fueled by Artificial Intelligence applications and their handling and analysis of huge data sets to support the Singularity-Ecosystem.

Even though most people are skeptical about the Singularity, and others believe in it and are amazed at how quickly it will come, I happen to feel it’s taking FOREVER to get there! I feel the pace of innovation, despite being super fast, is ridiculously slow for what I would want. Do you think that quantum computers or anything else will accelerate Moore’s Law considerably in the next few years? It seems odd to me that although we understand the benefits of this super-fast exponential growth, we do nothing to try to accelerate it. Just imagine where humanity would be if we were, say, just 20 years ahead of schedule by now. Hunger would probably have been eliminated already, to name just one thing.

So I ask, WHAT, if anything, might make this go faster than it already is?