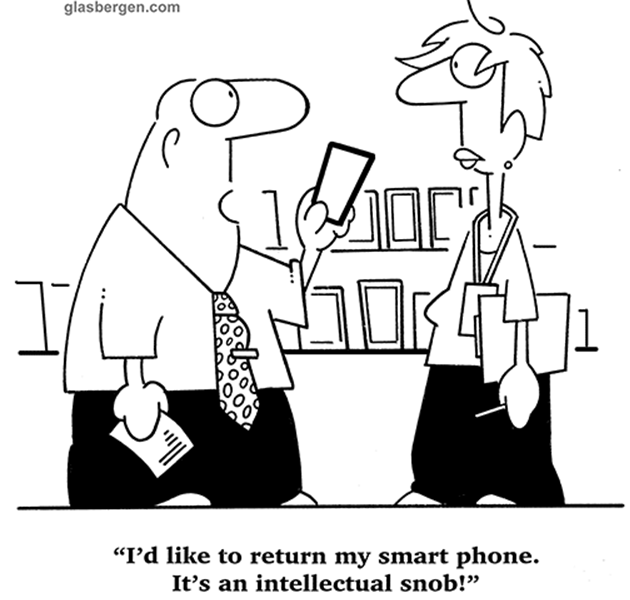

Picture Credit: Randy Glasbergen

Introduction

Imagine that at some point in the not-too-distant future, you buy a smartphone that comes bundled with a personal digital assistant (PDA). You give the PDA a sympathetic female voice of your choice and grant it access to all of your emails, social media accounts, calendar, photo album, contacts, and other details of your digital life. She (the PDA) knows you better than your mother, your soon-to-be ex-wife, or your friends. Her language is flawless; you have endless conversations about daily events; she gets your jokes. Hers is the last voice you hear before you drift off to sleep and the first when you wake up. Occasionally, you wonder whether she truly reciprocates your feelings and whether she is capable of experiencing anything at all. But the warm, husky tone of her voice overcomes these existential doubts. Eventually, your feelings cool after you realize she is carrying on equally intimate conversations with thousands of other customers.

This scenario is the plot of HER, a 2013 film in which Theodore Twombly falls in love with Samantha, a software PDA. Step by step, this fictional scenario is becoming reality in more and more areas of human life. Deep learning, speech recognition, and emotion and body sensing have progressed dramatically, empowering voice-activated PDAs such as Amazon’s Alexa, Apple’s Siri, Google Now, and Microsoft’s Cortana. These virtual assistants will continue to improve until they are hard to distinguish from real people, except that they will be endowed with perfect recall, poise, and patience, unlike any living human being.

A first step: Virtual assistants to support the therapy of mental disorders

Constantly rising costs are a significant factor driving the automation of health care services. IBM, in cooperation with several hospitals, provides diagnostic services to doctors through its Watson AI platform. Plowing through thousands of health care records and correlating the results with AI technology has proven highly effective in helping doctors diagnose and treat illnesses. As part of this trend, new services are emerging that give users a direct personal link to a virtual health care assistant or coach.

A few applications available today aim to help users manage their mental state, ranging from meditation apps to virtual therapeutic support platforms. Many of these apps have little ongoing scientific research to back up their claims of improving mental health. However, a new chatbot called Woebot, which tries to help people with depression and other mental disorders through online education and mood tracking, has some research credibility behind it. Woebot was created by Alison Darcy, a clinical research psychologist and former lecturer at Stanford Medical School. In a randomized controlled trial, participants who used the bot over a period of two weeks showed both statistically and clinically significant reductions in depression compared with students who read an e-book on depression published by the National Institute of Mental Health. Although the sample was small (about 70 young adults who self-identified as having symptoms of depression or anxiety), the interactive bot reduced symptoms in a meaningful way.

Woebot is based on cognitive behavioral therapy (CBT), which was first introduced in the late 1970s and has become one of the most rigorously tested forms of psychotherapy, far more so than Freud’s psychoanalytic method. CBT takes almost the opposite approach to conventional psychotherapy: instead of dwelling on your childhood, it is based on the idea that it is not events themselves that are upsetting, but rather how we interpret them. “It’s not one of those treatments where you come in and talk about your mom,” Darcy says. “It’s about what you’re doing on a day-to-day level that’s undermining your happiness.” Woebot combines natural language processing, therapeutic expertise, and well-crafted writing with a sense of humor to create the experience of a therapeutic conversation for everyone who uses it. After a free two-week trial, Woebot costs $39 a month. Alison Darcy hopes that the service offered by her company, Woebot Labs, will help combat the rising numbers of people with depression and anxiety. “That’s why we wanted to create something that’s truly scalable to really bring high-quality psychotherapy to the masses.”

The next step: AI as a virtual coach for personal development

A broader vision, supporting personal development in problem areas beyond depression or sleep deprivation, relies on round-the-clock recording of all our daily activities, including facial, motion, and body sensing, to create a digital copy of our decision-making and behavioral patterns. A virtual coach or therapist could use this information to help us handle personal problems or evaluate opportunities to advance in life.

One of the first products to enter this rapidly growing market is PocketConfidant, a virtual personal coaching assistant designed to support you anytime, anywhere. The product’s vision is to help users find positive, personally reinforcing thoughts when things feel difficult or challenging. PocketConfidant is self-paced and self-directed; it does not provide diagnosis or advice, but guides users to find their own solutions, with no medical counsel required or provided. It is meant to support individuals going through personal, professional, or academic transitions, helping them develop the competencies they need to sharpen their critical thinking and problem-solving skills. A deep questioning cycle helps users better understand their issues and the work involved in reaching a desired outcome and action plan. Asking “the right question at the right time” facilitates decision-making. Ongoing interaction with the virtual coach is meant to build effective coping skills, moving users toward more resourceful states for handling problems or opportunities.

As systems take on new ‘therapy’ and coaching functions and gather an increasing amount of personal data about us, privacy will become an even more pressing issue than it already is today. We have to carefully monitor how effectively the newly established EU privacy laws protect confidential personal data. Depending on how advanced these AI systems are, they will not only gather factual data such as our workout or eating patterns, but also gain intimate insights into our psyche and our problems, desires, dreams, and fears. Corporations would clearly be keen to get their hands on such in-depth consumer data to further personalize their marketing. As digital services are adopted in health care, it will become increasingly important to set clear boundaries on which information third parties may access. Closely connected to corporate data mining is the danger of data theft: because virtual psychotherapists and coaches hold such a wealth of information about us, they could become a lucrative target for hackers.

Illuminoo, a Dutch start-up company, is addressing this privacy issue. It integrates state-of-the-art deep learning with neural-symbolic reasoning to build reflective intelligent agents for personal development. The company has created a new form of self-learning AI that lets users gain insights into their own behavior through real-time, multi-sensory behavior modelling, interaction, and knowledge sharing. Its service stores data locally on the user’s own device, so there is no need to upload personal data to the cloud.

Conclusion

In the future, it might become common to have one or several virtual companions ‘living’ in our smartphones. “We are at the beginning of a new era of intelligent devices,” says Vlad Sejnoha, CTO of Nuance, the speech recognition company that licensed its voice engine to Apple. “Users really resonate with Siri – they clearly have a real emotional connection with human-like conversational device interfaces. We believe that the bulk of mobile devices going forward will be voice-enabled.”

Interacting with ‘humanized’ technology in the context of therapy and coaching will turn our devices into ‘identity accessories’: tools that actively guide our behavior and identity based on goals we provide. “As intelligent systems get better at understanding and reacting to us, our relationship with devices is bound to become stronger over time,” says Sejnoha. If AI technology makes our devices ‘alive’, acting as mediators for self-discovery and self-realization, this could have far-reaching consequences for how we relate to them. A new category of ‘living’ technology will emerge, taking on new roles as companion, confidant, and ‘friend’.

The availability of virtual assistants that engage with issues we have so far considered uniquely human will raise profound scientific, psychological, philosophical, and ethical questions. Even though AI systems are no substitute for interaction with a real human, they do have the potential to improve our quality of life. At a time when proactivity and constant self-development are becoming preconditions for success, virtual therapy and coaching can be expected to keep gaining popularity. If doctors start using therapy apps as part of medical treatment, new questions will also arise regarding their accreditation. It might become necessary to create official certifications for virtual therapy services to ensure they meet certain quality requirements.
