Body sensing Credit: zayedhicare.ae

Introduction

Embodied cognition, the idea that the mind is not only connected to the body but is also shaped by it, is a relatively new concept in cognitive science. It stands in sharp contrast to dualism, a theory famously expounded by René Descartes in the 17th century when he claimed that “there is a great difference between mind and body, inasmuch as body is by nature always divisible, and the mind is entirely indivisible… the mind or soul of man is entirely different from the body”. For nearly 400 years, Western thought about what constitutes knowing has been overshadowed by Descartes’ mind-body dualism, expressed in his oft-quoted maxim: “I think, therefore I am.” On this view, thinking (cognition) should be free of the distracting and potentially deceptive cues the senses (the body) might provide. While this discussion continues on a philosophical level, a growing community of neuroscientists, among them Antonio Damasio, author of ‘Descartes’ Error’ (first published in 1994), is challenging the dualist separation of mind and body as the foundation of human existence. Embodiment has likewise become an issue in AI research, which explores the connection between behaviour and brain functionality.

Sensing as a key resource of embodiment and the ethical issues it raises

Touch, sight, hearing, smell and taste define our primary inventory of senses for experiencing the outside world. The organs associated with each sense provide information to the brain to help us understand and perceive the world around us. In addition, neurologists define at least four more senses, each based on sensory cells that respond to a specific physical phenomenon: heat, pain, balance and locality (an internal GPS). With the ongoing advances in the sensing technology of smart watches, smart glasses and headbands equipped with EEG technology, a new generation of sensors records embodied data in real time. Cloud services provide AI algorithms that interpret the data and augment our own embodied perceptions of the outside world, signalling deviations from a predefined norm. For example, individuals with health problems who monitor heart rate, blood sugar (diabetes) or blood pressure in real time can receive early warnings based on the algorithms applied to the collected data. Because embodied information can be combined with observational data such as facial expression or body movement, the collection of the corresponding datasets raises many ethical and data-protection issues. Moreover, aggregating this data across teams working on specific tasks within an organisation raises issues of management control and culture.
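
To make the idea of “signalling deviations from a predefined norm” concrete, here is a minimal Python sketch. It is only an illustration: the `Norm` class, the `early_warnings` function, the three-reading window and the heart-rate numbers are all assumptions made for this example. They are not medical thresholds and not the method of any particular device or cloud service.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Norm:
    """Hypothetical 'predefined norm': an acceptable range for one signal."""
    low: float
    high: float


def early_warnings(readings: Iterable[float], norm: Norm,
                   window: int = 3) -> List[Tuple[int, float]]:
    """Return (index, moving_average) pairs wherever the average of the
    last `window` readings falls outside the norm."""
    recent: List[float] = []
    warnings: List[Tuple[int, float]] = []
    for i, value in enumerate(readings):
        recent.append(value)
        if len(recent) > window:
            recent.pop(0)  # keep only the newest `window` readings
        if len(recent) == window:
            avg = sum(recent) / window
            if not (norm.low <= avg <= norm.high):
                warnings.append((i, avg))
    return warnings


# Illustrative heart-rate stream in beats per minute (made-up values).
heart_rate = [72, 75, 74, 90, 118, 125, 130, 96, 80]
resting_norm = Norm(low=50, high=100)  # assumed range, for illustration only

alerts = early_warnings(heart_rate, resting_norm)
for index, avg in alerts:
    print(f"reading {index}: 3-sample average {avg:.1f} bpm is outside the norm")
```

Averaging over a short window rather than reacting to single readings is one simple way to avoid raising an alarm on a momentary spike. A real service would presumably use far richer models and personalised norms.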

From embodiment to learning

Embodiment begins at birth. For the new-born infant, even the simplest act of recognising an object can be understood only in terms of bodily activity. Embodied cognition can explain the process through which infants attain spatial knowledge and understanding. According to the developmental psychologist Eleanor Gibson, exploration itself plays an essential role in cognitive development. For example, infants explore whatever is in their vicinity by seeing, tasting or touching it before learning to reach for objects nearby. Then infants learn to crawl, which enables them not only to seek out objects beyond reaching distance but also to learn about basic spatial relations between themselves, objects and others, including a basic understanding of depth and distance. Hence, through exploration, infants get to know the nature of the physical and social world around them. Though adults have more experiential information to draw on, the fundamental process of learning at any age is based on creating and elaborating networks of neural associations. Although our subjective experience of thinking is something we can articulate, all thought originates in the embodied brain’s activity of non-verbal analogical association. Because these analogical associations are the brain’s primary references, everything we come to know and understand, including the most abstract concepts, originates with embodiment. Neuroscience shows that what we call “thought” is the end of a long train of meaning that begins with the body’s responses to external and internal environments. The memory that we use to articulate and reason is formed only at the end of complex, nonconscious embodied processes.

The differences between emotions and feelings and their sensing potential

Neuroscientists tend to distinguish between emotions and feelings. According to Antonio Damasio, emotions are the body’s internal responses to environmental stimuli, triggered by the brain, while feelings are conscious and subjective perceptions of those changes. Memory traces of any experience always include the associated body state, and these ancient reservoirs of emotional reaction continue to invisibly affect our responses in the present. The organism’s most basic survival decision (what to move toward and what to move away from) remains an emotional reaction, made under tight time constraints. Emotions and feelings are distinct yet highly interconnected, two sides of the same coin:

Emotions are lower-level responses that create biochemical reactions in one’s body. They originally helped our species survive by producing quick reactions to threat, reward, and everything in between within its environment. Emotions precede feelings and are physical and instinctual. Because they are physical, they can be objectively measured through blood flow, brain activity, facial expressions, body language and voice.

Feelings are mental associations and reactions to emotions, influenced by personal experience, beliefs, and memories. A feeling is the mental portrayal of what is going on in one’s body when one has an emotion; feelings are the consequence of having an emotion. Feelings involve cognitive input, usually subconscious, and cannot be measured precisely. The influence also runs the other way: just thinking about something threatening can trigger an emotional fear response. While individual emotions are temporary, the feelings they evoke may persist and grow over a lifetime.

From embodiment to reward-focused reinforcement learning

Research shows that embodiment and learning correlate in achieving cognitive intelligence. Going one step further, the hypothesis that the promise of rewards enhances this learning process is put forward in a research paper recently published by DeepMind, Alphabet’s AI research unit. Titled “Reward is Enough,” the paper draws inspiration from the evolution of natural intelligence as well as lessons from recent achievements in artificial intelligence. The authors suggest that reward maximization and trial-and-error experience are enough to develop behaviour that exhibits the kind of abilities associated with intelligence. From this they conclude that reinforcement learning, a branch of AI based on reward maximization, can lead to the development of artificial general intelligence (AGI). One landmark in the ongoing development of reinforcement learning was AlphaGo, a combination of hardware and software that beat Lee Sedol, one of the world’s best players of the board game Go, in 2016; it was followed by AlphaGo Zero and AlphaZero. In their new paper, the AI researchers also address the issue of embodiment. So far, observing the behaviour of animals provides the first clues to analogies with humans’ reward-focused behaviour. Object recognition enables animals to detect food, prey, friends, and threats, or to find paths, shelters, and perches. Image segmentation enables them to tell objects apart and avoid fatal mistakes such as running off a cliff or falling off a branch. Touch, taste, and smell give the animal a richer sensory experience of its habitat and a greater chance of survival in dangerous environments. Rewards and environments also shape innate and learned knowledge in animals. For instance, hostile habitats ruled by predators such as lions and cheetahs reward ruminant species that have the innate knowledge to run away from threats from birth. Meanwhile, animals are also rewarded for their ability to learn specific knowledge of their habitats, such as where to find food and shelter.
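
The phrase “reward maximization and trial-and-error experience” can be illustrated with one of the simplest reinforcement-learning settings, a multi-armed bandit. The Python sketch below is a textbook epsilon-greedy agent written for this article; it is not code from the DeepMind paper. The function name `run_bandit`, the three reward probabilities, the exploration rate and the random seed are all assumptions chosen for illustration.

```python
import random
from typing import List, Tuple


def run_bandit(reward_probs: List[float], steps: int = 5000,
               epsilon: float = 0.1, seed: int = 0) -> Tuple[List[float], List[int]]:
    """Epsilon-greedy agent on a Bernoulli bandit.

    The agent never sees `reward_probs`; it only observes the reward
    (1 or 0) that follows each action it tries.
    """
    rng = random.Random(seed)
    n_actions = len(reward_probs)
    estimates = [0.0] * n_actions  # running average reward per action
    counts = [0] * n_actions       # how often each action was tried

    for _ in range(steps):
        if rng.random() < epsilon:
            # Trial and error: occasionally try a random action.
            action = rng.randrange(n_actions)
        else:
            # Reward maximization: pick the action that looks best so far.
            action = max(range(n_actions), key=lambda a: estimates[a])

        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        counts[action] += 1
        # Incremental update of the average reward for this action.
        estimates[action] += (reward - estimates[action]) / counts[action]

    return estimates, counts


# Three hypothetical "food sources" with different chances of paying off.
estimates, counts = run_bandit([0.2, 0.5, 0.8])
best_action = max(range(len(estimates)), key=lambda a: estimates[a])
print("estimated value per action:", [round(v, 2) for v in estimates])
print("times each action was tried:", counts)
print("action the agent prefers:", best_action)
```

The agent is told nothing about its world in advance. It comes to prefer the most rewarding option only because it keeps acting and keeps track of what each action yielded, loosely analogous to an animal learning where to find food.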

Robotics as a testbed for sensor-based reinforcement learning

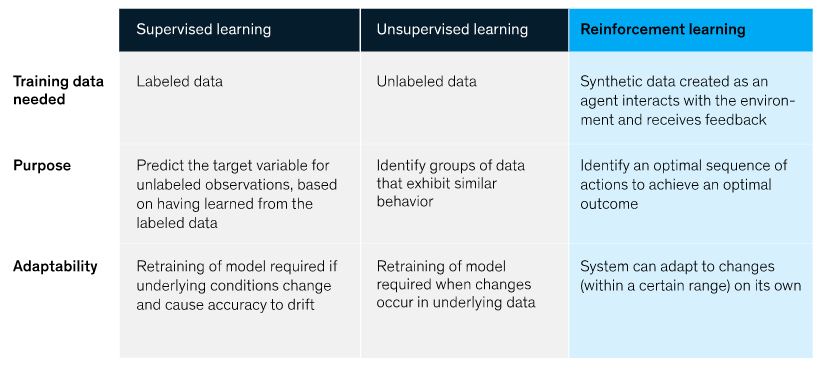

Reinforcement learning differs significantly from the prevailing, traditional AI-concepts as depicted by a recently published chart from the McKinsey organisation:

Next to mastering games like Go and thereby outperforming humans, robots represent an ideal testing ground for reinforcement learning and for exploring the impact of reward-driven behaviour. Intelligent machines with sensors and actuators that interact with their environment are excellent tools to help us understand the embodied, embedded and extended nature of cognition. In contrast to the subjects of empirical studies of cognition, robots can be freely manipulated, and virtually all key variables of their embodiment and control programs can be systematically varied. Hence, different reward strategies can be applied and tested using reinforcement learning algorithms. Robot-based research is not only a productive path to deepening our understanding of cognition; robots can also benefit strongly from human-like cognition in order to become more autonomous, robust and safe, as discussed in the 2018 research paper ‘Robots as Powerful Allies for the Study of Embodied Cognition from the Bottom Up’ (arxiv.org). Autonomous driving is probably the most developed and most heavily funded application of sensor-based embedded intelligence. However, millions of miles driven by test vehicles have shown that mimicking a driver’s reactions to unforeseen events goes beyond the sensor-based computational capacity of an autonomous vehicle. Only if the environmental conditions and driving rules are exactly laid out, for example through special lanes for long-distance trucking, does autonomous driving stand a chance of recovering the huge investments made so far.
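
The claim that different reward strategies can be applied and tested on a robot can be sketched in a few lines of Python. Everything here is hypothetical: a simulated robot on a seven-cell track, a small reward at the near end, a reward of varying size at the far end, and an arbitrarily chosen discount factor. To keep the result deterministic, the sketch uses value iteration, a planning method from the reinforcement-learning toolbox, in place of trial-and-error learning on real hardware.

```python
from typing import Dict, List

N_CELLS = 7                   # cells 0..6 on a one-dimensional track (hypothetical)
TERMINALS = {0, N_CELLS - 1}  # the episode ends at either end of the track
START = 2                     # the robot starts closer to cell 0
GAMMA = 0.8                   # discount factor, chosen arbitrarily
ACTIONS = (-1, +1)            # move one cell left or right


def plan(reward_at: Dict[int, float], sweeps: int = 50) -> List[int]:
    """Run value iteration for one reward strategy and return the
    greedy path of cells the robot follows from START."""
    values = [0.0] * N_CELLS

    def q(cell: int, action: int) -> float:
        # Reward for entering the next cell plus discounted future value.
        nxt = cell + action
        return reward_at.get(nxt, 0.0) + GAMMA * values[nxt]

    for _ in range(sweeps):
        for cell in range(N_CELLS):
            if cell not in TERMINALS:
                values[cell] = max(q(cell, a) for a in ACTIONS)

    path = [START]
    # The length cap is only a safety net against endless wandering.
    while path[-1] not in TERMINALS and len(path) < 2 * N_CELLS:
        cell = path[-1]
        best = max(ACTIONS, key=lambda a: q(cell, a))
        path.append(cell + best)
    return path


# Strategy A: both ends pay the same, so the nearer one wins.
equal_path = plan({0: 1.0, 6: 1.0})
# Strategy B: the far end pays five times more, which outweighs the delay.
generous_path = plan({0: 1.0, 6: 5.0})

print("equal rewards:   ", equal_path)     # [2, 1, 0]
print("generous far end:", generous_path)  # [2, 3, 4, 5, 6]
```

The robot and the track are identical in both runs; only the reward strategy changes, and with it the behaviour. This kind of controlled variation is difficult to achieve with human or animal subjects.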

Conclusion

While sensor-based reinforcement learning is likely to match or augment humans’ cognitive intelligence, there are other intelligences that lie beyond cognition. This supports the view that, across the entire spectrum of human intellectual and emotional capacity, humans cannot be replaced by intelligent machines. Hence, the challenge we face is to provide continuous education at all age levels, taking advantage of the AI tools available to create knowledge that augments our learning capacity and supports us in better decision-making and problem-solving, with rewards as stipulated by the concept of reinforcement learning.